VMs and Containers: Scale Up vs. Scale Out

Originally published on: https://developer.ibm.com/articles/scale-up-and-scale-out-vms-vs-containers/

To increase computing throughput, you can scale up or scale out. In this article, we discuss how the definitions of scaling up and scaling out have evolved from their on-premises, virtual-machine-based meanings to today’s cloud-native, container-based world.

First, we’ll define the terms by using a non-technical example: laundry. Let’s say you have one washing machine and you want to wash more clothes. How can you do this? By scaling up or scaling out.

- Scale up: Buy a larger washing machine, allowing you to wash more clothes per load.

- Scale out: Buy a second washing machine, allowing you to wash more clothes in the same amount of time.

For the purposes of this article, the term scaling up is synonymous with vertical scaling and scaling out is synonymous with horizontal scaling.

Both approaches increase the amount of laundry you can get through. We can apply the same principles to running applications. Let’s first look at how scaling works with physical servers and virtual machines (VMs), and then compare it with how it works for containers.

Scaling machines - virtual and physical

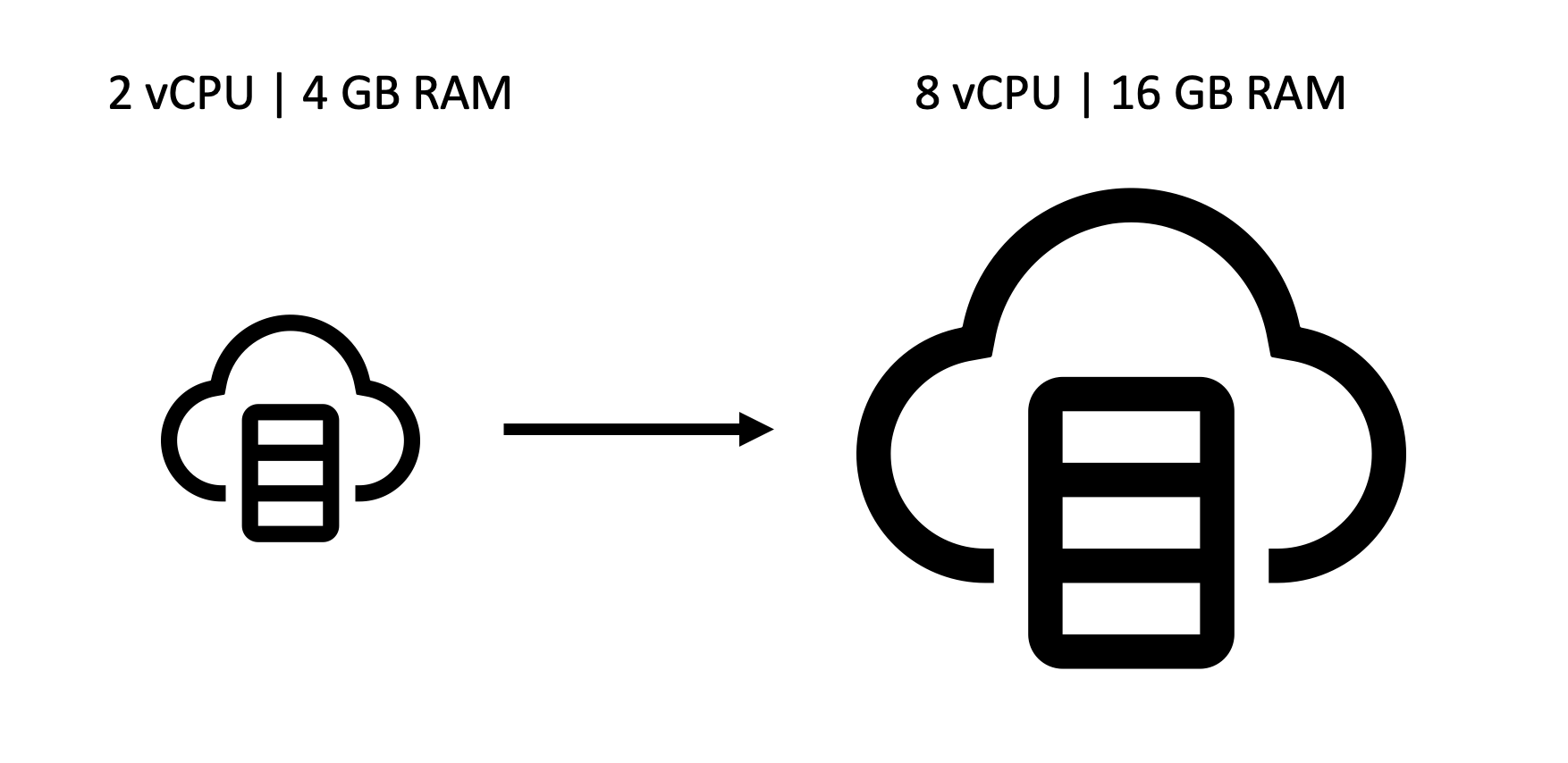

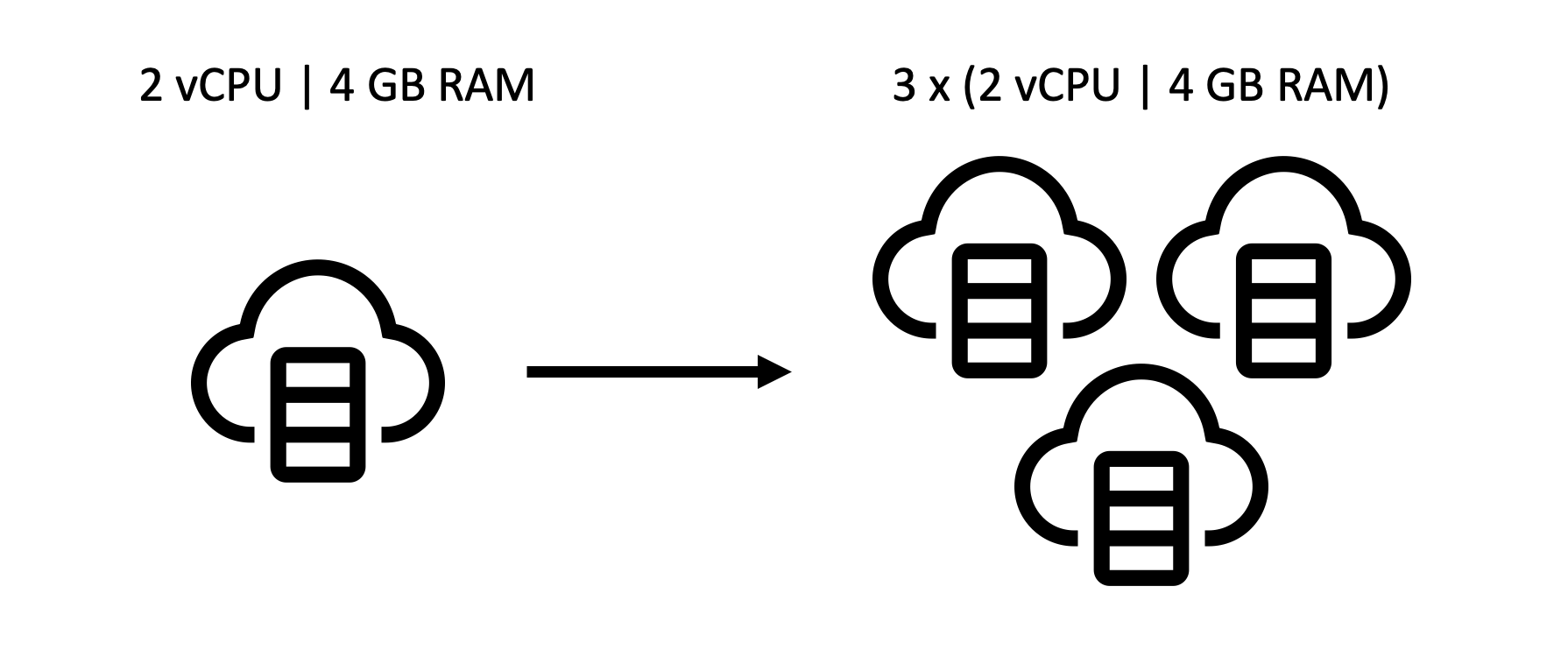

If applications or workloads running on a VM or a logical partition, either on-premises or in a cloud, are running into out-of-memory (OOM) issues or slow performance, you can either scale up or scale out to resolve the issue:

Scale up (Vertical scaling)

An easy solution to this problem is to increase the CPU and RAM on the machine or partition. This has the benefit of not requiring additional servers. In some cases, however, your application may experience downtime while the VM is resized.

Scale out (Horizontal scaling)

To scale out, you provision additional VMs to spread the workload around. If you don’t have spare computing capacity to spread out to, you’ll have to purchase additional servers. Either way, the downside of this approach is that your environment becomes more complicated: you’ll need to configure load balancers and databases appropriately.
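To make the load-balancing step more concrete, here is a minimal Python sketch of round-robin distribution, one common strategy a load balancer can use to spread requests across scaled-out VMs. It is not tied to any particular load-balancing product; the `RoundRobinBalancer` class and the VM names are hypothetical.

```python
class RoundRobinBalancer:
    """Hypothetical balancer: hands each request to the next VM in a fixed rotation."""

    def __init__(self, backends):
        self._backends = list(backends)
        if not self._backends:
            raise ValueError("need at least one backend")
        self._next = 0  # index of the VM that receives the next request

    def pick(self):
        # Return the current VM and advance the rotation, wrapping at the end.
        backend = self._backends[self._next]
        self._next = (self._next + 1) % len(self._backends)
        return backend

    def add_backend(self, backend):
        # Scaling out: a newly provisioned VM joins the end of the rotation.
        # The current index stays valid because the list only grows.
        self._backends.append(backend)


lb = RoundRobinBalancer(["vm-a", "vm-b"])  # hypothetical VM names
first = [lb.pick() for _ in range(3)]      # vm-a, vm-b, vm-a
lb.add_backend("vm-c")                     # scale out by one VM
after = [lb.pick() for _ in range(3)]      # vm-b, vm-c, vm-a
```

Each added VM takes an equal share of new requests without any change to the callers, which is the benefit scaling out buys. The extra moving part (the balancer itself, plus any shared state such as a database) is the added complexity described above.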

Scaling containers

Kubernetes and its many distributions, like OpenShift, are being deployed to enable the automatic scaling of container-based applications. So, how can you scale a containerized application on Kubernetes?

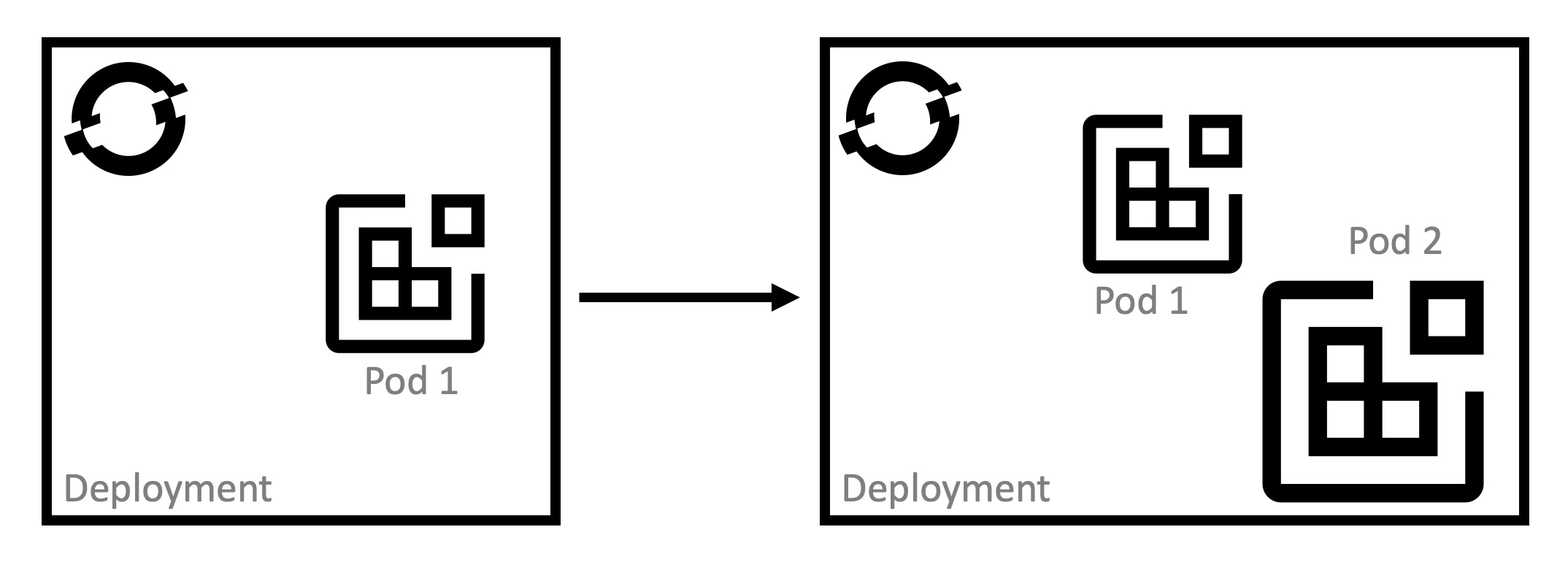

Scale up (Vertical scaling)

Scaling up a pod means raising its CPU and memory requests and limits, so some pods end up with more resources than others. In this context, vertical scaling is better suited to stateful applications, where requests need to be handled by a particular pod. Stateless applications don’t have this requirement, so horizontal scaling is easier for them, with the added benefit that every pod is defined the same way.

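Luckily, an autoscaler exists for exactly this purpose: the vertical pod autoscaler (VPA), which adjusts a pod’s resource requests based on its observed usage. For more info, check out the Automatically adjust pod resource levels with the vertical pod autoscaler section of the OpenShift documentation.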
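As a rough illustration of how usage-based vertical scaling can arrive at a new resource request, the Python sketch below takes a high percentile of observed usage, adds a safety margin, and clamps the result to optional bounds. This is a simplified, hypothetical model: the real VPA recommender builds decaying usage histograms and applies its own percentiles and margins, so the 90th percentile and 15% margin used here are illustrative choices, not VPA’s exact defaults. The `min_allowed`/`max_allowed` parameters loosely mirror the `minAllowed`/`maxAllowed` bounds a VPA resource policy can set.

```python
import math


def recommend_request(usage_samples, percentile=0.9, margin=0.15,
                      min_allowed=None, max_allowed=None):
    """Hypothetical recommender: percentile of usage plus a margin, clamped to bounds."""
    if not usage_samples:
        raise ValueError("need at least one usage sample")
    ordered = sorted(usage_samples)
    # Nearest-rank percentile: the smallest sample covering `percentile` of the data.
    rank = max(1, math.ceil(percentile * len(ordered)))
    observed = ordered[rank - 1]
    # Add headroom so short spikes above the percentile don't starve the pod.
    recommended = observed * (1 + margin)
    # Respect the (illustrative) lower and upper bounds if they are given.
    if min_allowed is not None:
        recommended = max(recommended, min_allowed)
    if max_allowed is not None:
        recommended = min(recommended, max_allowed)
    return recommended


# CPU usage samples in millicores (made-up numbers for illustration).
cpu_millicores = [100, 120, 110, 300, 130, 140, 150, 160, 170, 180]
request = recommend_request(cpu_millicores)  # about 207 millicores
```

Taking a percentile rather than the maximum keeps a single outlier (the 300 millicore sample above) from inflating the request, while the margin leaves some headroom above typical peaks.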

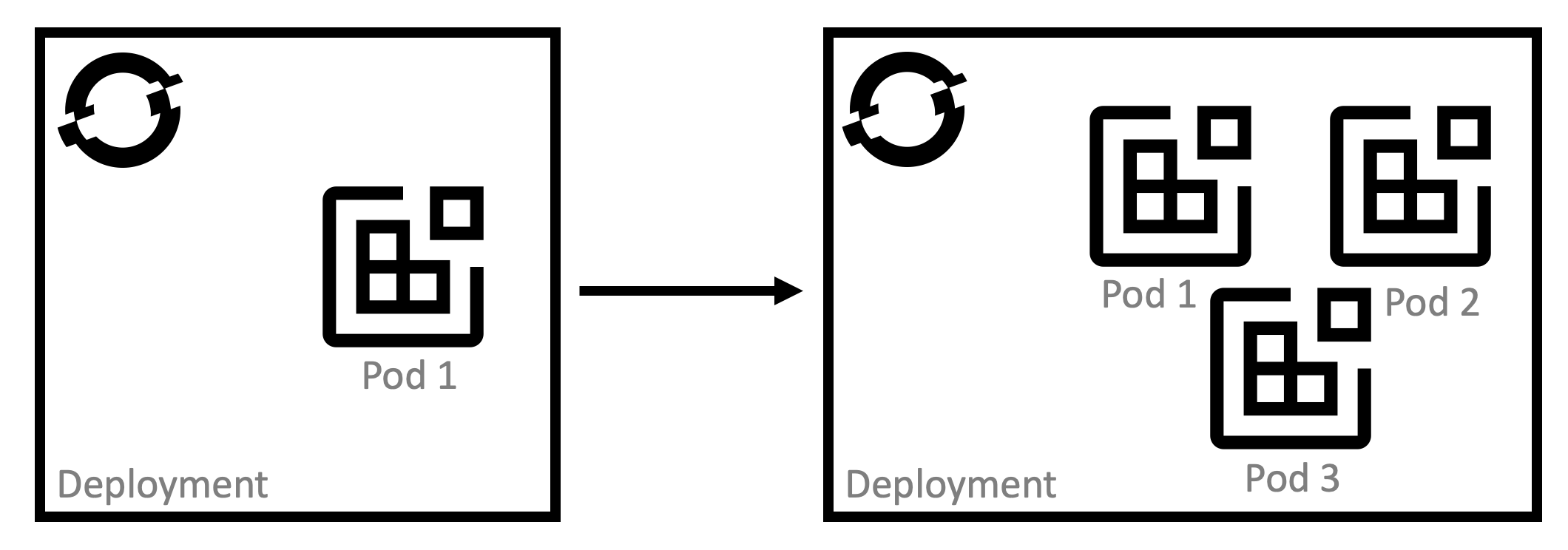

Scale out (Horizontal scaling)

Horizontal scaling is what Kubernetes does best; it’s what the platform was designed to do. Pods in a Kubernetes deployment can be replicated simply by changing the deployment’s replica count, giving you horizontal scaling in seconds, and a Kubernetes Service load-balances traffic across those replicas without a separate load balancer to configure. With the horizontal pod autoscaler (HPA), you can specify the minimum and maximum number of pods you want to run, as well as the CPU or memory utilization your pods should target.
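The core of the HPA’s decision can be sketched in a few lines of Python. The Kubernetes documentation describes the basic rule as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), skipping the change when the ratio is within a tolerance of 1.0 (0.1 by default), with the result kept between the configured minimum and maximum. The function below is a minimal sketch of that rule only; the real controller also handles stabilization windows, pod readiness, and missing metrics, which are omitted here. The function name and parameters are illustrative.

```python
import math


def desired_replicas(current_replicas, current_utilization, target_utilization,
                     min_replicas, max_replicas, tolerance=0.1):
    """Sketch of the HPA scaling rule for a single utilization metric."""
    if target_utilization <= 0:
        raise ValueError("target utilization must be positive")
    ratio = current_utilization / target_utilization
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: keep the current replica count.
        desired = current_replicas
    else:
        # Scale proportionally to how far usage is from the target, rounding up.
        desired = math.ceil(current_replicas * ratio)
    # Never go below the minimum or above the maximum pod count.
    return max(min_replicas, min(max_replicas, desired))


# 4 pods averaging 90% CPU against a 60% target -> scale out to 6 pods.
print(desired_replicas(4, 90, 60, min_replicas=1, max_replicas=10))
```

Because each pod in a deployment is defined identically, the controller only has to compute a number; Kubernetes then creates or removes replicas to match it.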

For more info, check out the Automatically scaling pods with the horizontal pod autoscaler section of the OpenShift documentation.

So, what should I use?

Well, it depends.

Scaling up will continue to be a great approach for applications that must be co-located due to latency or legal requirements, such as data-intensive processes or mission-critical workloads that need the highest levels of security and data privacy. It is also a good choice for applications that aren’t containerized. IBM Z hardware in particular is well suited for this scenario and can run up to 19 billion encrypted transactions a day.

Scaling out will continue to be the go-to approach for cloud-native applications. Kubernetes is here to stay, and horizontal scaling is one of the platform’s strongest features. Without an orchestrator like Kubernetes, this model quickly becomes difficult to manage manually, so if you’re considering scaling out, you should strongly consider adopting one to avoid problems later in implementation.

Resources

- IBM Z content on IBM Developer

- Kubernetes Autoscaling 101: Cluster Autoscaler, Horizontal Pod Autoscaler, and Vertical Pod Autoscaler

- OpenShift Documentation: Automatically adjust pod resource levels with the vertical pod autoscaler

- OpenShift Documentation: Automatically scaling pods with the horizontal pod autoscaler

- Turbonomic: Cloud Scalability: Scale Up vs Scale Out

- Iconography from IBM Design